Dreamforce had an overload of new announcements and enhanced features that are continuing to emerge. Below are some of my main takeaways and key points of interest going into the new year of 2020.

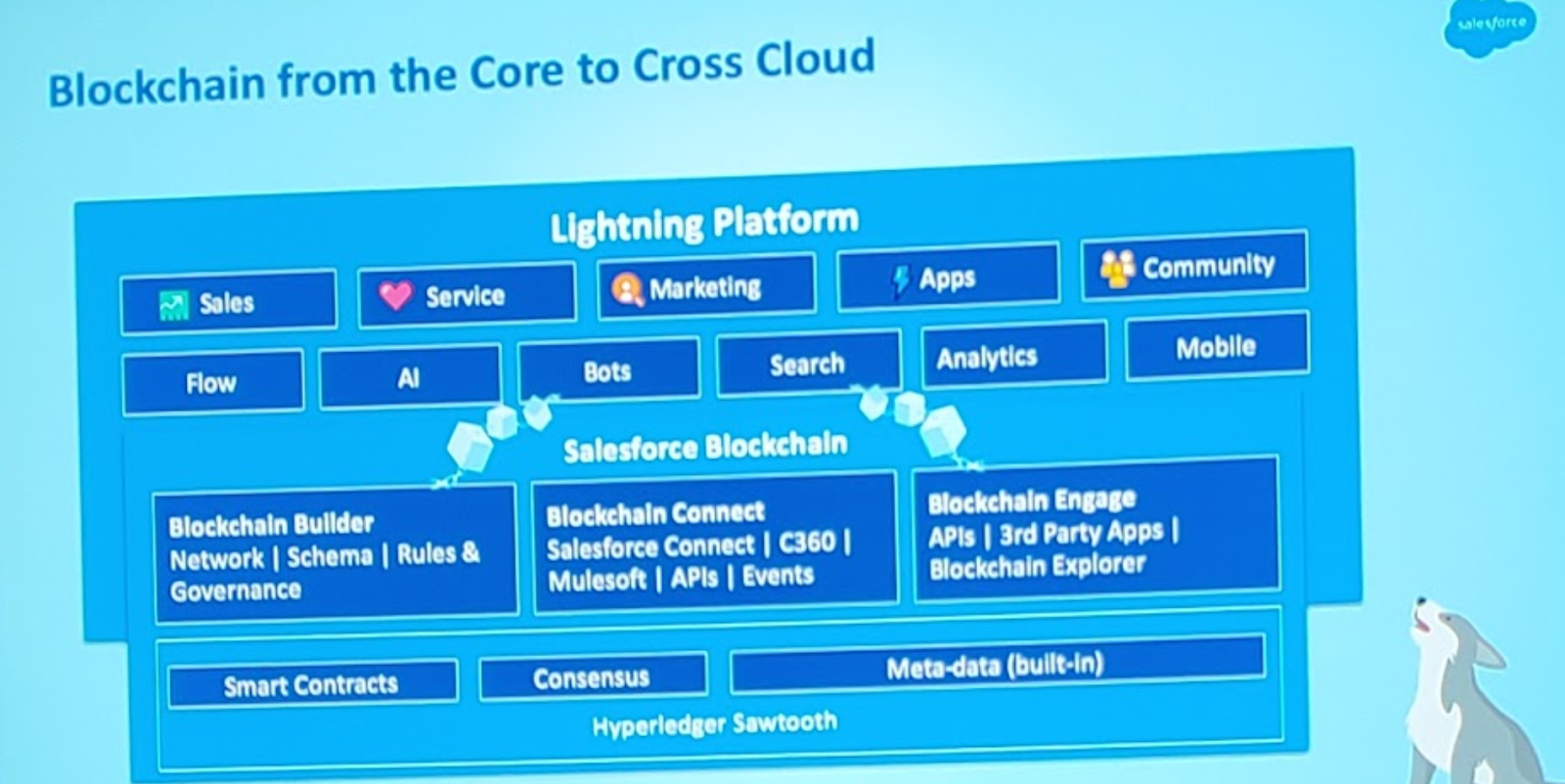

Blockchain

This was announced at TrailheaDX in 2019 but had a big presence at Dreamforce as the feature nears general availability in 2020. Blockchain for Salesforce is not complicated: it’s just another type of database, available when needed and set up entirely through a declarative interface similar to App Builder. Your use case just needs to line up with its main benefits for it to be a viable option over using your standard data store.

Reasons to use blockchain:

- An immutable ledger – this can be trusted for auditing purposes because recorded historic events can never be changed. Each change (record added or updated) is added as a new block (a new chain link) that is cryptographically signed, and all interested parties must agree on it.

- Shared Data – a blockchain datastore is shared with trusted partners who participate in accessing, approving others’ actions, and writing to the store.

- Decentralized – the datastore is replicated for each interested party, and the copies cannot drift out of sync. Since each partner has its own copy of the blockchain, there is no need to integrate and couple partner systems.
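To make the “immutable ledger” idea concrete, here is a minimal Python sketch of a hash chain. It is a conceptual illustration only, not Salesforce Blockchain’s API: the function names and the sample course data are made up, and it leaves out the signing and partner agreement steps that a real shared ledger adds.

```python
import hashlib
import json


def block_hash(index, data, prev_hash):
    """Hash a block's contents together with the previous block's hash."""
    payload = json.dumps(
        {"index": index, "data": data, "prev_hash": prev_hash},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_block(chain, data):
    """Append a new block that links to the current last block."""
    prev_hash = chain[-1]["hash"] if chain else "0" * 64
    index = len(chain)
    block = {
        "index": index,
        "data": data,
        "prev_hash": prev_hash,
        "hash": block_hash(index, data, prev_hash),
    }
    chain.append(block)
    return block


def is_valid(chain):
    """Recompute every hash and check each link to the previous block."""
    prev_hash = "0" * 64
    for index, block in enumerate(chain):
        if block["index"] != index or block["prev_hash"] != prev_hash:
            return False
        if block["hash"] != block_hash(index, block["data"], prev_hash):
            return False
        prev_hash = block["hash"]
    return True


# Hypothetical sample entries
ledger = []
append_block(ledger, {"course": "MAT 101", "units": 3, "grade": "A"})
append_block(ledger, {"course": "ENG 102", "units": 3, "grade": "B"})
print(is_valid(ledger))  # True

ledger[0]["data"]["grade"] = "A+"  # tamper with recorded history
print(is_valid(ledger))  # False
```

Because each block’s hash covers the previous block’s hash, editing any historic entry breaks every link after it, which is why a partner holding its own copy can detect the change.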

I saw two real-life examples of Salesforce blockchain in action. The first was Arizona State University (ASU), which used it to build a student transcript ledger shared across universities. A Lightning component on the student contact record displays each course, number of units, and grade stamped to the ledger along a historic timeline. If the student moves to another school, each approved school has immediate access to the ledger to validate course history and transfer credits. Each approved school in the blockchain can also write to the ledger for courses taken at that school. This example highlighted the immediate value of a shared, trusted, and immutable data source.

The second example I saw was for a pharmaceutical company responsible for producing labels for prescription bottles. This was a much more complex use case in which multiple parties take part in a strict, auditable approval process to get a new label approved by the FDA and produced. This example emphasized blockchain capabilities for a highly regulated process involving multiple independent parties.

Trailhead Blockchain Basics can be found here.

Lightning Performance Testing

I found a gem of a session, Lightning Performance Management & Scalability Best Practices (link below), and learned about some very useful but hidden tools built into Salesforce for performance testing Lightning pages, apps, and components.

Experienced Page Time (EPT) is a metric for measuring the time it takes for a page to finish loading. Append the “?eptvisible=1” parameter to the end of any Lightning page URL to enable the page load time tools. Once enabled, you will see a colored timer measuring the time for all components to load.
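If the page URL already has a query string, the parameter needs to be joined with “&” rather than a second “?”. A small Python sketch of that, using the parameter exactly as written above (the helper name and the example domain are placeholders of mine):

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def with_ept_timer(url, param="eptvisible", value="1"):
    """Return url with the EPT parameter set, keeping any existing query parameters."""
    parts = urlsplit(url)
    # Drop an existing copy of the parameter so it is not added twice.
    query = [
        (k, v)
        for k, v in parse_qsl(parts.query, keep_blank_values=True)
        if k != param
    ]
    query.append((param, value))
    return urlunsplit(parts._replace(query=urlencode(query)))


# Placeholder domain for illustration
print(with_ept_timer("https://example.lightning.force.com/lightning/page/home"))
print(with_ept_timer("https://example.lightning.force.com/lightning/o/Account/list?filterName=Recent"))
```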

There are three main factors to evaluate for optimizing the EPT:

- Page Complexity: Too many components loading on the page simultaneously can cause performance issues. Try to place more components into tabs or accordions for lazy loading. Migrating old Visualforce and even Aura components to Lightning Web Components will improve performance.

- Network latency: How far you are from the physical Salesforce instance as well as your network speed will impact how long it takes for data to get from Salesforce servers to the component view on your browser.

- Browser processing speed: A metric called Octane score can be used to benchmark browser JavaScript performance. Also, Salesforce actually has minimum recommended hardware requirements for the machine running your web browser. For optimal performance they recommend 8GB RAM with 3GB available for Chrome tabs (e.g. call center reps working in Console with many tabs open).

You can use the Salesforce Performance Test to determine network and browser speeds. Navigate to <your domain>.lightning.force.com/speedtest.jsp to launch the Salesforce network speed test from your machine.

The full session video on Lightning Performance Testing can be found here.

Platform Cache

This feature has been available for some time now, but it continues to evolve. It improves the performance of read-heavy custom applications by caching data and saving trips to the database or an API. Overall database load can be decreased, and end users can see up to 60% faster response times when data is served from the cache instead of repeating a common database query or a complex calculation. It was announced that Salesforce is giving away some cache space: 3MB for AppExchange approved listings and 200MB for qualified partners. I’m hoping to take advantage of this to improve some of Zennify’s listings.
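The pattern behind that speed-up is “check the cache first, go to the database only on a miss.” Here is a rough Python sketch of the idea. It is a stand-in for illustration, not the Platform Cache API (on the platform this is done in Apex through the `Cache` namespace), and the class, loader function, and sample values are hypothetical.

```python
import time


class TtlCache:
    """A tiny in-memory cache with per-entry expiry (a stand-in, not Platform Cache)."""

    def __init__(self, ttl_seconds=300.0, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}  # key -> (expires_at, value)

    def get_or_load(self, key, loader):
        """Return the cached value for key; call loader() only on a miss or expiry."""
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]
        value = loader()
        self._entries[key] = (now + self._ttl, value)
        return value


calls = {"count": 0}


def load_exchange_rates():
    """Hypothetical expensive query or API call."""
    calls["count"] += 1
    return {"USD": 1.0, "EUR": 0.9}


cache = TtlCache(ttl_seconds=60)
cache.get_or_load("rates", load_exchange_rates)  # miss: runs the loader
cache.get_or_load("rates", load_exchange_rates)  # hit: served from the cache
print(calls["count"])  # 1
```

The expiry matters as much as the lookup: cached data is only useful for values that can be slightly stale, so pick a lifetime that matches how often the underlying data changes.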

Dive into the details here

Check out the Platform Cache Trailhead

Customer 360 Truth

The Customer 360 platform and the “truth” was a major theme of Dreamforce 2019. But what is it really, other than the next reinvented buzzword like “digital transformation”?

- Use of all Salesforce cloud products to create a full experience (Sales, Service, Marketing, Commerce, Community)

- Data Manager – Create a standardized data model and a globally unique identifier for each customer with native connectors and field mappings for Marketing Cloud, Commerce Cloud and other Salesforce instances. Map to one unified view of the customer (Trailhead can be found here)

- MuleSoft – One integration platform to get data from any system to the Salesforce customer profile

- Tableau – Analytics across all data sources and the ability to embed within the customer Lightning page
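To picture what “a globally unique identifier for each customer” means in the Data Manager bullet above, here is a toy Python sketch. The matching rule (exact match on a normalized email), the field names, and the sample records are all assumptions for illustration; the session did not describe how Data Manager actually matches records.

```python
import uuid


def normalize_email(email):
    """Trim whitespace and lowercase so trivial differences do not split a customer."""
    return email.strip().lower()


def unify(records):
    """Group source records by normalized email and assign one global ID per customer."""
    profiles = {}
    for record in records:
        key = normalize_email(record["email"])
        profile = profiles.setdefault(
            key,
            {"global_id": str(uuid.uuid4()), "email": key, "sources": {}},
        )
        # Remember which local record in each system maps to this customer.
        profile["sources"][record["system"]] = record["local_id"]
    return list(profiles.values())


# Hypothetical records from three systems
records = [
    {"system": "marketing", "local_id": "M-1", "email": "Ada@Example.com"},
    {"system": "commerce", "local_id": "C-9", "email": " ada@example.com"},
    {"system": "service", "local_id": "S-4", "email": "grace@example.com"},
]
for profile in unify(records):
    print(profile["global_id"], profile["sources"])
```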

At the end of the applause, what you’re left with is a large suite of tools to choose from. It is up to an expert partner such as Zennify to provide the architectural guidance and proper implementation needed to achieve the Customer 360 that your business truly needs.

Lightning Full Sandbox

This new feature will allow a sandbox to be refreshed with all data in minutes, versus many hours in most cases. From what I saw, there is still a 29-day refresh interval as with normal Full sandboxes, so it didn’t seem like that great of a benefit, but you might be able to have more of them available. There wasn’t much detail, and it wasn’t clear when this will be available.

Data Mask

This new security product allows anonymization, pseudonymization, or deletion of fields when refreshing and creating sandboxes with data. There are some third-party products out there that do this already, but this will now be built into the platform. This is extremely beneficial for organizations that store sensitive personal or financial data in production and need to protect it from being exposed in less secure environments. Data Mask was announced to be available as early as next month.
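The three options differ in what survives the masking. A short Python sketch of the distinction follows; it is my own illustration of the general techniques, not how Data Mask is implemented or configured, and the rule names, field names, and key are hypothetical.

```python
import hashlib
import hmac
import secrets


def pseudonymize(value, key):
    """Replace a value with a keyed hash: the same input and key give the same token."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


def anonymize(_value):
    """Replace a value with random text that has no link to the original."""
    return secrets.token_hex(8)


def mask_record(record, rules, key):
    """Apply a per-field rule; fields without a rule pass through unchanged."""
    masked = {}
    for field, value in record.items():
        rule = rules.get(field)
        if rule == "delete" or value is None:
            masked[field] = None
        elif rule == "pseudonymize":
            masked[field] = pseudonymize(str(value), key)
        elif rule == "anonymize":
            masked[field] = anonymize(value)
        else:
            masked[field] = value
    return masked


# Hypothetical record and rules
key = secrets.token_bytes(32)
contact = {"Name": "Ada Lovelace", "Email": "ada@example.com", "SSN": "123-45-6789", "Stage": "Open"}
rules = {"Name": "anonymize", "Email": "pseudonymize", "SSN": "delete"}
print(mask_record(contact, rules, key))
```

Pseudonymized values stay consistent across records, so joins and duplicate checks still behave in the sandbox; anonymized values do not, and deleted fields are simply emptied.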

Learn more about Data Mask here.

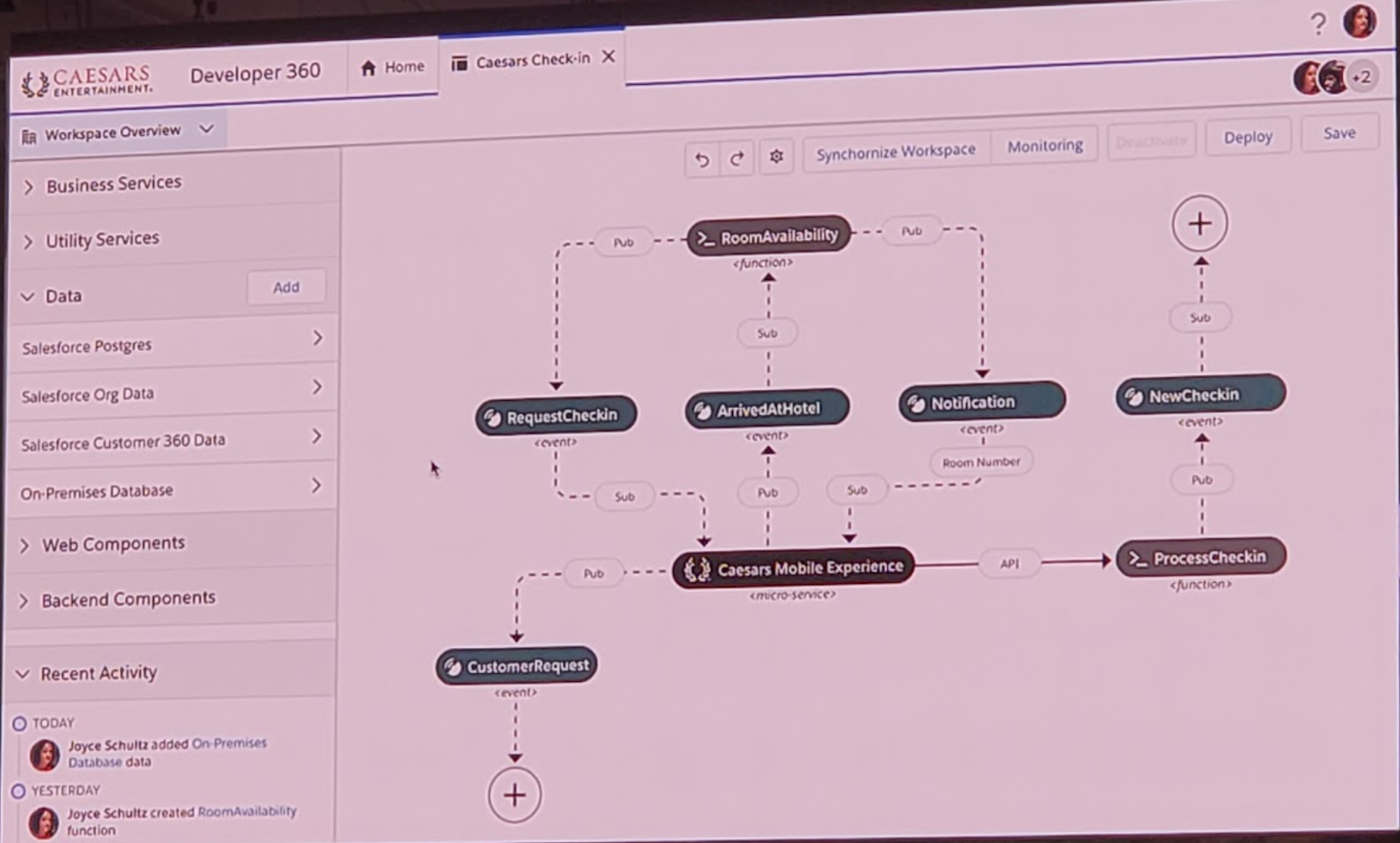

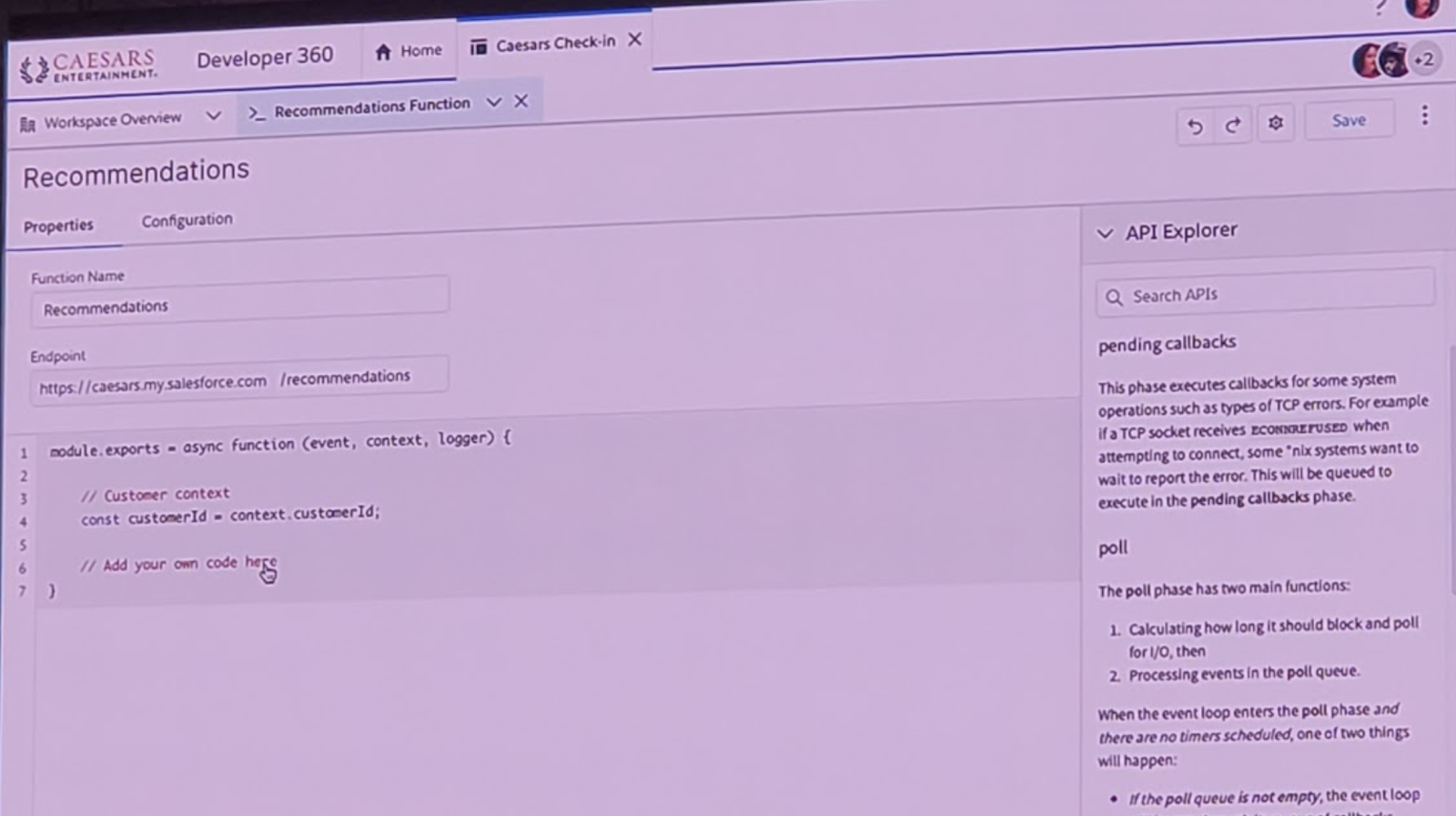

Developer 360

This new feature is very early and appears to be in preview, but it looks very appealing. It’s an attempt to unify all Salesforce development tools into one experience, and to me it looks like the next generation of Developer Console. On a single page, you use tabs to switch between Flow Builder, custom microservices development (a.k.a. Evergreen), web component management, the APIs available via MuleSoft Exchange, and an event orchestration map. There is also a live Chatter feed of updates from collaborating developers (e.g. “John S. added Account flow”).

Salesforce announced that it is becoming one “Platform” with Heroku, using the power of its two platforms and Kubernetes to allow embedded microservices written in languages like Java and Node.js. These microservices can perform external logic on data, dip into other external services, and make use of the libraries available to that language. This feature was announced as Salesforce Evergreen.

Did you know?

- Local development server now available for LWC

- Base LWC components now open source

- Mobile Publisher can publish a branded version of your Salesforce applications (or Communities!) directly to Google Play and Apple App Stores

- For anyone who uses Lucidchart for building diagrams, there is a built-in Salesforce connector that can pull in your object and field schema. There is a little “Salesforce Import” link with a cloud icon at the bottom of the left nav bar (or navigate to File -> Import Data -> Salesforce Schema).